Conversation Analysis

WorkWave

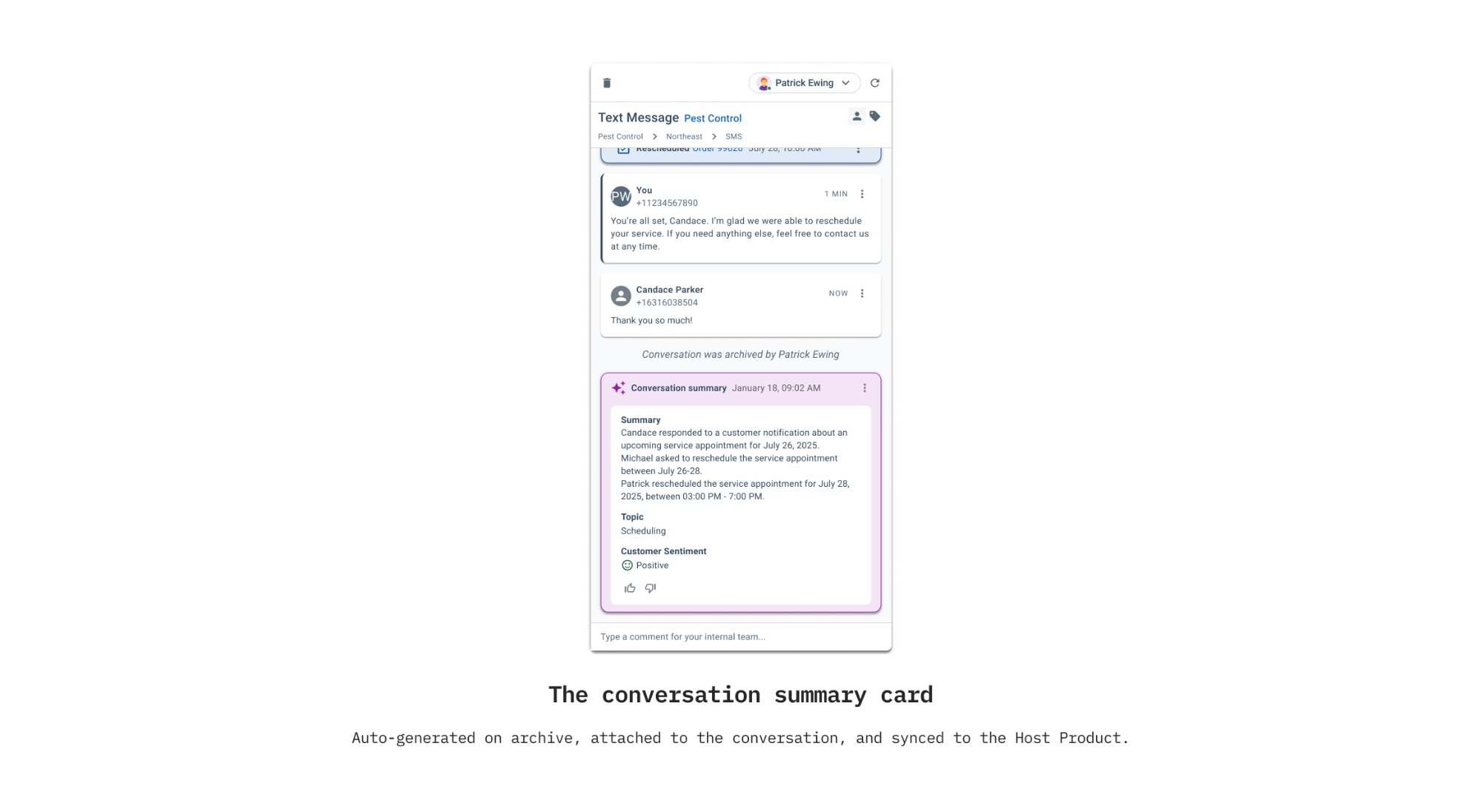

Turning thousands of customer conversations into structured insight, generated automatically, without slowing agents down.

The Insight Was Always There

Inside WorkWave's Communication Center, teams handle thousands of customer conversations containing signals about sentiment, recurring issues, and service history. That insight was usually lost unless agents manually summarized interactions and copied notes into the host CRM, PestPac. Documentation was inconsistent, records fell out of sync, and managers had no scalable way to understand trends.

I led end-to-end design for Conversation Analysis, partnering with product and engineering to explore how automatic summaries, topics, and sentiment could preserve that knowledge without adding work. From the start we aligned on a clear direction: automation should remove steps, not create them. The feature had to live inside existing workflows, keep humans in control of the final record, and sync insight automatically so records stayed aligned. AI assists; it doesn't override.

Discovery & Research

I mapped how conversations were actually closed in production. Customer interviews and workflow reviews using Pendo metrics showed that manual summaries were often skipped because agents prioritized speed. Managers confirmed they had no scalable way to understand patterns across conversations: insight lived in raw message history, not structured data.

The key insight: AI would only be adopted if it attached itself to behavior agents already perform. Any extra dashboard or review queue would fail. That finding anchored the direction: analysis had to run in the background, appear at the right moment, and stay editable so humans retained final control.

Early Concepts

Early exploration focused on flows and permissions. Design, product, and engineering collaborated on a consent model that rolled Communication Center settings into CRM controls, ensuring company and customer opt-in were explicit. Because the feature touched AI and customer data, transparency and permissions were treated as core UX problems, not legal checkboxes.

From there, I explored multiple entry points for automated summaries, including an always-available trigger, which we rejected due to system cost and behavioral risk. Summaries needed to feel purposeful, not like a novelty action, so we anchored analysis to the wrap-up phase. This was complicated by the fact that Communication Center isn't a phone provider: we support phone integrations, so a summary could only be generated after a call ended and a transcript was available. That constraint, combined with varying data quality across channels, made editable outputs and clear system states essential from the start.

Iterations & Decisions

With a working proof of concept, we launched a closed beta with enterprise customers to validate trust and usefulness. I partnered with product and support to review ratings across summaries, topics, and sentiment, targeting ~80% accuracy as an early viability benchmark. Separate rating controls helped isolate weak areas and guide iteration.

As confidence grew throughout the beta, decisions shifted from whether the AI worked to how it should behave in production. I worked with engineering to refine the editing rules, limiting topic and sentiment changes to admins to protect reporting integrity, and to keep the experience lightweight while syncing results end-to-end with the CRM. Generated summaries automatically update records and remain pinned to conversation history, making insight visible without adding new steps.

Outcomes

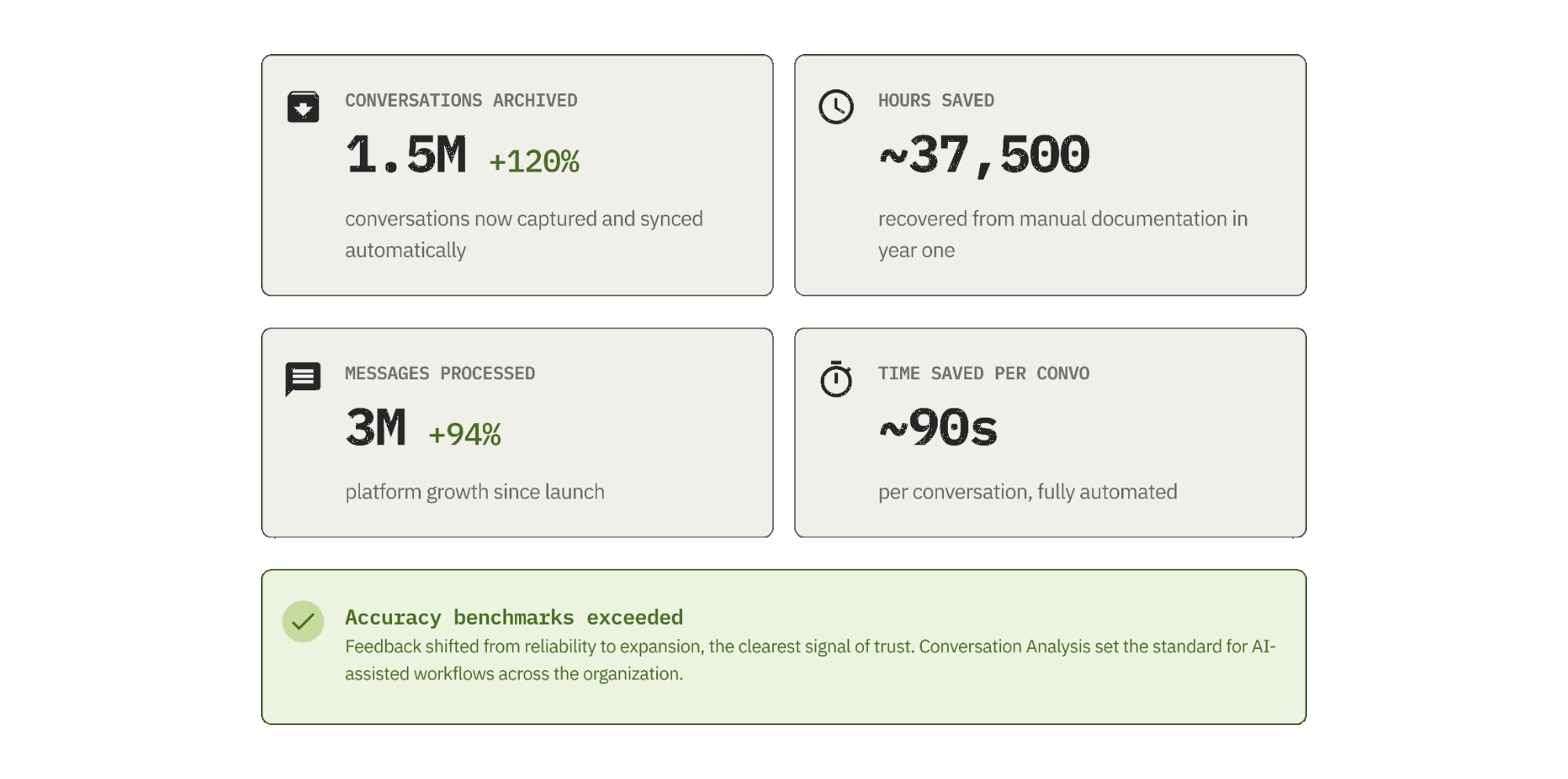

Conversation Analysis launched in early 2025 and gained traction immediately. Accuracy benchmarks were exceeded, and customer feedback focused on expansion rather than reliability, a strong signal of trust. Adoption scaled alongside platform growth: 3M+ messages processed (+94% YoY) and 1.5M archived conversations (+120% YoY).

Each archived conversation previously required ~90 seconds of documentation. Across 1.5M archived conversations, automation translated to an estimated 37,500 hours saved in one year. Agents spent less time copying notes across systems, while managers gained more consistent records without enforcement overhead. The feature connected daily operations to long-term reporting without changing user behavior, establishing a scalable model for AI workflows that improve data quality while respecting frontline speed.
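
The hours-saved estimate can be reproduced as a back-of-the-envelope calculation. It assumes every one of the 1.5M archived conversations would otherwise have needed the full ~90 seconds of manual documentation; that is a simplifying assumption, not a measured figure.

```latex
\[
1{,}500{,}000 \ \text{conversations} \times 90 \ \text{s}
  = 135{,}000{,}000 \ \text{s},
\qquad
\frac{135{,}000{,}000 \ \text{s}}{3{,}600 \ \text{s/h}}
  = 37{,}500 \ \text{h}
\]
```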

Takeaways

- Agents adopted the feature without any change to their workflow. Anchoring analysis to the archive step, something agents already did, meant there was nothing new to learn.

- This wasn't a single-team project. Design decisions around editing permissions and CRM sync had downstream effects on two engineering teams. Keeping those surfaces consistent required constant communication, not just handoff.

- Generation is fully automatic, but agents can edit summaries after the fact. The feature handles the 90% case while keeping humans in control of the final record. The goal was assistive AI, not prescriptive AI.

- The most effective flows weren't the most inventive ones. Early concepts tried to do too much. Cleaner solutions came from stripping back to the actual job to be done and designing within the constraints of the existing system rather than around them.

- Cross-functional collaboration across time zones introduced real coordination overhead. The moments where the project stalled or needed rework almost always traced back to a communication gap, not a design problem. Over-communicating intent and decisions early saved more time than it cost.